
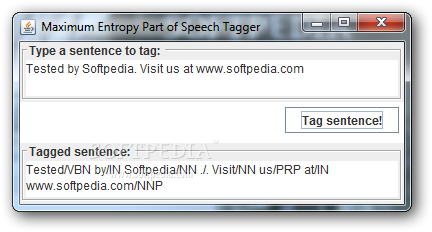

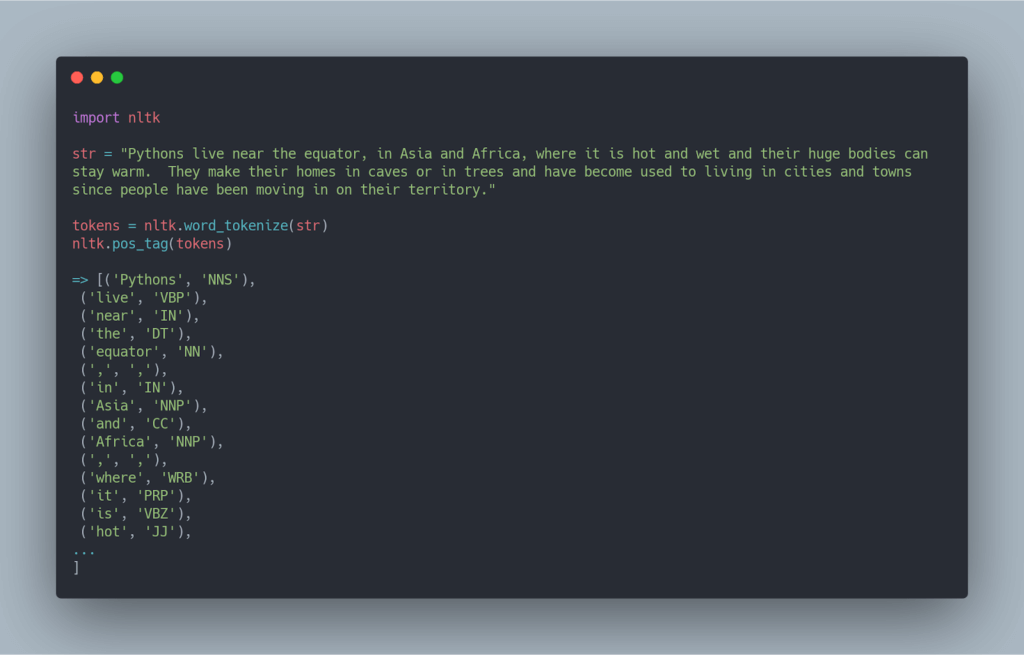

Oren Melamud, Ido Dagan, Jacob Goldberger, Idan Szpektor, and Deniz Yuret. In the Proceedings of the Eighteenth Conference on Computational Natural Language Learning (CoNLL-2014).

Most traditional distributional similarity models fail to capture syntagmatic patterns that group together multiple word features within the same joint context. In this work we introduce a novel generic distributional similarity scheme under which the power of probabilistic models can be leveraged to effectively model joint contexts. Based on this scheme, we implement a concrete model which utilizes probabilistic n-gram language models.

Current position: PhD student, Carnegie Mellon University, Pittsburgh (LinkedIn). Thesis: Analysis of SCODE Word Embeddings based on Substitute Distributions in Supervised Tasks. Koç University, Department of Computer Engineering. (PDF, Presentation, word vectors (github), word vectors (dropbox))

One of the interests of the Natural Language Processing (NLP) community is to find representations for lexical items using large amounts of unlabeled data. Inducing low-dimensional, continuous, dense word vectors, or word embeddings, has become the principal technique to find representations for words. Word embeddings address the issues of the classical categorical representation of words by capturing syntactic and semantic information of words in the dimensions of a vector. These representations are shown to be successful across NLP tasks including Named Entity Recognition, Part-of-speech Tagging, Parsing, and Semantic Role Labeling. In this work, I analyze a word embedding method in supervised NLP tasks. The framework maps words on a sphere such that words co-occurring in similar contexts lie close together. The similarity of contexts is measured by the distribution of substitutes that can fill them. I compare word embeddings, including more recent representations, in Named Entity Recognition (NER), Chunking, and Dependency Parsing. I examine the framework in a multilingual setup as well. The results show that the examined method achieves results as good as or better than the other word embeddings. The framework is consistent in improving the baseline systems across languages and achieves state-of-the-art results in multilingual dependency parsing.

Mehmet Ali Yatbaz, Enis Sert, Deniz Yuret. (This is a token based and multilingual extension of our EMNLP 2012 model. Up to date versions of the code can be found at github.)

We develop an instance (token) based extension of the state of the art word (type) based part-of-speech induction system [...] represented by a feature vector that combines information from the target word and probable substitutes sampled from an n-gram [...] instance based model does not lead to significant gains in overall accuracy in part-of-speech tagging because most words in running text are used in their most frequent class (e.g. [...]). However it is important to model ambiguity because most frequent words are ambiguous and not modeling them correctly may negatively affect upstream tasks. Our main contribution is to show that an instance based model can achieve significantly higher accuracy on ambiguous words at the cost of a slight degradation on unambiguous ones, maintaining a comparable overall accuracy. [...] many-to-one accuracy of the system is within 1% of the state-of-the-art (80%), while on highly ambiguous words it is up [...]. On multilingual experiments our results are significantly better than or comparable to the best published word or instance based systems on 15 out of 19 corpora in 15 languages. The vector representations for words used in our system are available for download for further experiments.
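
For readers unfamiliar with the many-to-one accuracy reported for the part-of-speech induction system, here is a minimal sketch of how that metric is commonly computed: each induced cluster is mapped to the gold tag it co-occurs with most often, and tokens are then scored against that mapping. The function name `many_to_one_accuracy` and the toy data are illustrative assumptions, not taken from the authors' code.

```python
from collections import Counter, defaultdict


def many_to_one_accuracy(induced, gold):
    """Score induced cluster labels against gold tags (hypothetical helper).

    Each induced cluster is mapped to its most frequent gold tag; several
    clusters may map to the same tag, hence "many-to-one".
    """
    if len(induced) != len(gold):
        raise ValueError("induced and gold must have the same length")
    if not gold:
        return 0.0

    # Count how often each gold tag appears inside each induced cluster.
    tag_counts_by_cluster = defaultdict(Counter)
    for cluster, tag in zip(induced, gold):
        tag_counts_by_cluster[cluster][tag] += 1

    # A token counts as correct when its gold tag is its cluster's majority tag.
    correct = sum(max(counts.values()) for counts in tag_counts_by_cluster.values())
    return correct / len(gold)


# Toy example: six tokens, three induced clusters, two gold tags.
induced = [0, 0, 0, 1, 1, 2]
gold = ["N", "N", "V", "V", "V", "N"]
score = many_to_one_accuracy(induced, gold)
```

In this toy example cluster 0 maps to "N" (2 of 3 tokens correct), cluster 1 to "V" (2 of 2), and cluster 2 to "N" (1 of 1), so five of the six tokens are scored as correct.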
